Benchmarking Pizza Ordering AI Agents

Overview

The promise of AI order agents in pizzerias is huge: streamlining ordering processes, offloading work from staff, and boosting overall profit margins.

However, pizza orders, with their high level of customization, complex menus, and lack of standardization across pizzerias, present the biggest challenge for AI within the food sector.

Benchmarking is more than a buzzword; it's a vital tool the AI industry relies on to keep improving its products. Despite rapid growth in AI adoption, no benchmark currently exists for AI order agents.

In response, we’ve developed an industry benchmark to share key findings and insights, helping the entire industry move toward better user experiences and greater business efficiency.

What defines a great customer experience when ordering pizza? Order accuracy is crucial—mistakes frustrate customers, lead to negative reviews, and increase refund requests, ultimately impacting profit margins.

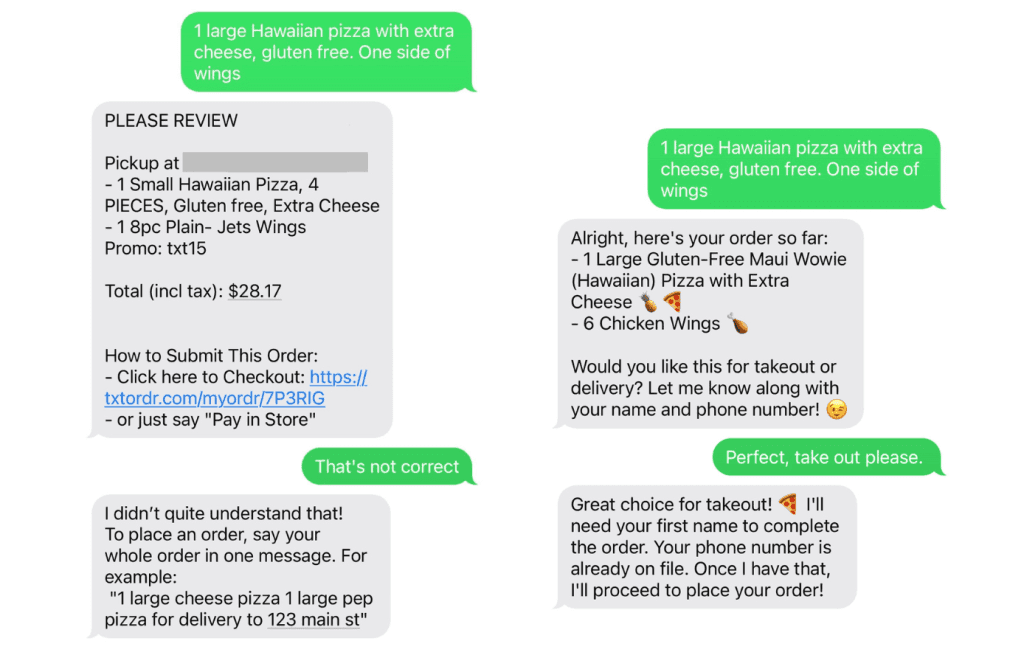

Here’s an example of different customer experiences:

Subpar customer experience:

Mistake in order item

Rigid ordering; not humanlike

Limited language understanding

Good customer experience:

Accurate order

Human and friendly; upsells

Brings out brand voice; delightful

In the rest of this post, we explore in depth the process, methodology, and results of our benchmark study.

2. Benchmark Design

The most fundamental (and most challenging) capability for AI agents is accurately processing highly customized pizza orders.

To highlight this, we conducted our benchmark using variations of food items—pizzas, salads, drinks, and sides—and pizza toppings like extra cheese, mushrooms, and Create Your Own (CYO) combinations.

2.1 General Cases

Since each AI order agent serves a different pizzeria with its own menu, we first wrote general test cases that apply to any menu, so that results would be comparable across agents. Using placeholders such as [signature pizza] for pizzas, toppings, salads, and drinks kept the generated test cases broad enough to yield meaningful benchmark results across all pizzerias.

We structured our evaluation into two main categories:

Basic categories (1–5): Variations included:

Single vs. multiple food types (e.g., only pizza vs. pizza and a salad)

Quantity and size differences

Whether the customer customized their order

Advanced categories (1.5–5.5): The same variations with added complexity; the .5 in each category ID indicates that the customer also customized toppings.

Table 1: Categories of Order Details

| Category ID | Single or multiple food types | Single or multiple food items | Did the customer change their order? | Customizing toppings |
|---|---|---|---|---|
| 1 / 1.5 | single | single | No | No / Yes |
| 2 / 2.5 | single | multiple | No | No / Yes |
| 3 / 3.5 | multiple | single | No | No / Yes |
| 4 / 4.5 | multiple | multiple | No | No / Yes |
| 5 / 5.5 | multiple | multiple | Yes | No / Yes |
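To make Table 1 concrete, here is a minimal Python sketch that maps an order's attributes to its category ID. The function and dictionary names are hypothetical; the mapping itself is just Table 1, and attribute combinations the table does not list return None.

```python
from typing import Optional

# Rows of Table 1: (multiple food types?, multiple food items?, customer changed order?)
# -> base category ID. Combinations not listed in Table 1 are absent.
BASE_CATEGORIES = {
    (False, False, False): 1,
    (False, True, False): 2,
    (True, False, False): 3,
    (True, True, False): 4,
    (True, True, True): 5,
}


def category_id(multiple_types: bool, multiple_items: bool,
                changed_order: bool, custom_toppings: bool) -> Optional[float]:
    """Return the Table 1 category ID for an order profile, or None if not covered."""
    base = BASE_CATEGORIES.get((multiple_types, multiple_items, changed_order))
    if base is None:
        return None
    # The advanced ".5" variant is the base category plus topping customization.
    return base + 0.5 if custom_toppings else float(base)
```

For example, an order with several food types and items, a mid-order change, and custom toppings lands in Category 5.5, the most complex case.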

For each category, we prompted GPT-4o to generate general test cases, each containing:

The ordering objectives corresponding to that category

Simulated user queries that would lead to successful order fulfillment
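One way to hold these generated test cases is a small record per case. The sketch below is an assumption about structure, not the format we actually used: the class names, fields, and the sample Category 5.5 case are hypothetical (the sample is not the case shown in Figure 1), and menu items are still placeholders at this stage.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class OrderLine:
    item: str                   # menu item or placeholder, e.g. "[signature pizza]"
    size: str = ""              # empty when the item has no size options
    quantity: int = 1
    toppings: Dict[str, int] = field(default_factory=dict)  # topping -> portions


@dataclass
class OrderTestCase:
    category: float                   # Table 1 category ID, e.g. 5.5
    objectives: List[str]             # what the simulated customer wants to order
    user_queries: List[str]           # customer turns that should lead to that order
    expected_order: List[OrderLine]   # the cart a successful agent should end with


# Hypothetical Category 5.5 case: multiple food types and items,
# a mid-order change, and a topping customization.
example = OrderTestCase(
    category=5.5,
    objectives=["Two large [signature pizza] with [topping], plus one [salad]"],
    user_queries=[
        "Hi, can I get a large [signature pizza] and a [salad]?",
        "Actually, make it two pizzas, and add [topping] to both.",
    ],
    expected_order=[
        OrderLine("[signature pizza]", size="large", quantity=2,
                  toppings={"[topping]": 1}),
        OrderLine("[salad]"),
    ],
)
```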

For example, for Category 5.5, the most complex order type, we provided the following inputs along with the expected output of a successful AI order agent:

Figure 1. A sample generalized test case for Category 5.5.

2.2 Customized Cases

Although we kept the generated test cases as general as possible, each one had to reflect a specific pizzeria's menu before it could be run against that pizzeria's agent. To achieve this, we mapped each placeholder to an actual item on the pizzeria's menu.

For example, for Pizza My Heart, [signature pizza] is mapped to the ‘Big Sur’ pizza, [vegetarian pizza] is mapped to the ‘Virgin Creek’ pizza, etc.
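The placeholder-to-menu mapping can be sketched as a simple substitution. Only the two Pizza My Heart entries come from the text above; the function name and the choice to fail loudly on an unmapped placeholder are assumptions for illustration.

```python
import re
from typing import Dict

# Placeholder -> menu item for one pizzeria. These two entries follow the
# Pizza My Heart examples above; a full mapping would cover every placeholder.
PMH_MAPPING: Dict[str, str] = {
    "[signature pizza]": "Big Sur",
    "[vegetarian pizza]": "Virgin Creek",
}

PLACEHOLDER = re.compile(r"\[[^\]]+\]")


def customize(text: str, mapping: Dict[str, str]) -> str:
    """Replace every [placeholder] in text with the pizzeria's menu item.

    Raises KeyError if a placeholder has no mapping, so gaps in the menu
    mapping surface before a test is run rather than during it.
    """
    return PLACEHOLDER.sub(lambda m: mapping[m.group(0)], text)
```

Applied to a query such as "Can I get a large [signature pizza] and a [vegetarian pizza]?", this yields "Can I get a large Big Sur and a Virgin Creek?".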

Figure 2. A sample test case for Category 5.5, customized for Pizza My Heart (PMH).

2.3 Benchmarked Agents

We then benchmarked several industry-leading solutions. Table 2 lists the agents evaluated.

Table 2. The benchmarked agents

| Agent | Pizzeria | Modality | Example phone number(s) |
|---|---|---|---|
| Jimmy by Palona.ai | Pizza My Heart (PMH) | Voice, Text | +1-855-376-7010 |
| ConverseNow | Domino's Pizza (Domino’s) | Voice | +1-815-722-3313 |
| SoundHound - 1 | Blaze Pizza (Blaze) | Voice | +1-585-775-0735 |
| SoundHound - 2 | California Pizza Kitchen (CPK) | Voice | +1-310-275-1101 |
| OrderAI by HungerRush | Jet’s Pizza | Text | +1-313-274-2600 |
| PizzaVoice by voiceplug.ai | Rusty’s Pizza | Voice | +1-805-564-1111 |

3. Benchmark Results and Analysis

3.1 Ordering Accuracy

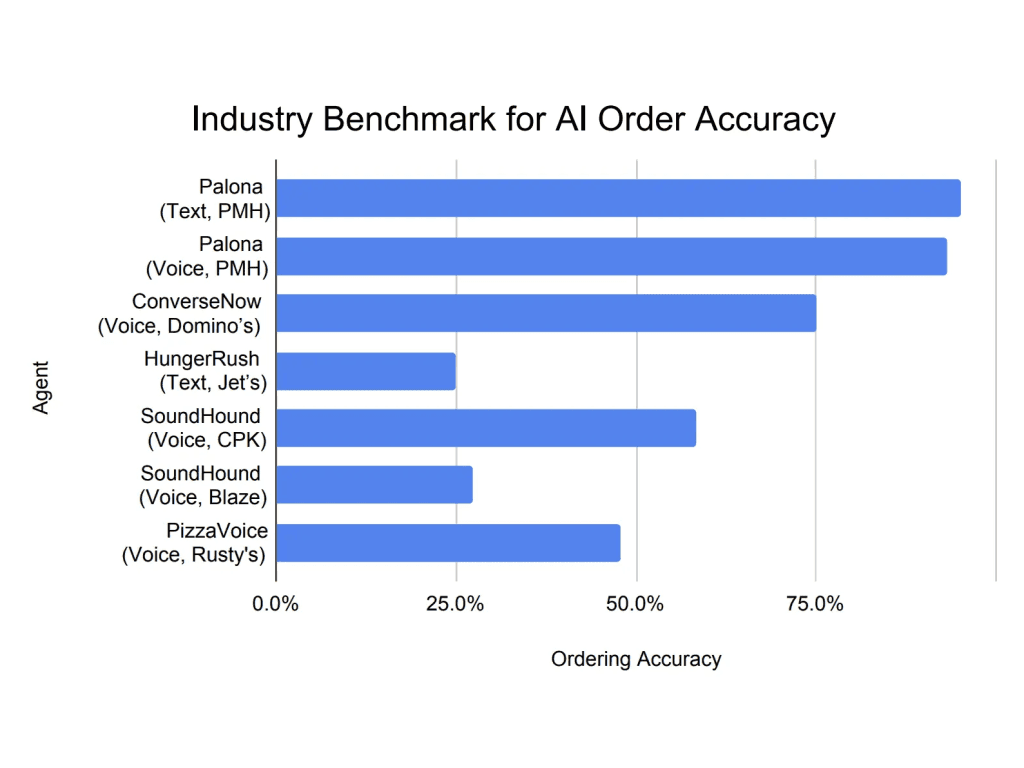

Below, we report each agent's ordering accuracy on our benchmark dataset.

The formula we used to calculate accuracy is:

$$\mathrm{Acc} = \frac{1}{N}\sum_{i=1}^{N} \mathrm{Acc}_i$$

where $\mathrm{Acc}_i$ is the score for test case $i$: 1 (correct) if the final order matches the expected output, and 0 (incorrect) otherwise.

Summing these scores over all test cases and dividing by $N$, the total number of tests run, gives $\mathrm{Acc}$, the average accuracy across all test cases, which we report as the overall benchmark accuracy.
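The accuracy formula translates directly into code. In this minimal sketch, we assume each order is normalized to a list of line-item strings and that the order of lines in the cart does not matter; the normalization format and function names are hypothetical.

```python
from collections import Counter
from typing import List, Sequence

# Assumption: an order is a list of normalized line-item strings such as
# "1x large Big Sur +mushrooms"; the order of lines in the cart is ignored.
Order = List[str]


def case_score(final_order: Order, expected_order: Order) -> int:
    """Acc_i: 1 if the final order matches the expected order exactly, else 0."""
    return int(Counter(final_order) == Counter(expected_order))


def benchmark_accuracy(final_orders: Sequence[Order],
                       expected_orders: Sequence[Order]) -> float:
    """Acc = (1 / N) * sum of Acc_i over the N test cases."""
    if len(final_orders) != len(expected_orders) or not expected_orders:
        raise ValueError("need one final order per test case, and at least one case")
    scores = [case_score(f, e) for f, e in zip(final_orders, expected_orders)]
    return sum(scores) / len(scores)
```

Because scoring is all-or-nothing per case, an order that is correct except for one missing topping counts the same as a completely wrong order.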

The resulting accuracies are shown below:

Figure 3. Ordering accuracy of the agents.

Our agent, Jimmy, achieves state-of-the-art accuracy of 93.3% to 95.0%, well ahead of the second-best product on the market, which reached only 75.0%.

Jimmy's accuracy comes from a design built to handle all possible customizations of pizza toppings and sauces, as well as diverse combinations of pizzas and other food items.

Jimmy maintains a structured conversational memory, allowing him to track user preferences, customizations, and past interactions in real time.

Jimmy is equipped with a dynamic knowledge base that includes detailed information about menu items, available customizations, and restaurant policies. This allows him to validate user requests instantly.

Unlike reactive AI agents that wait for explicit user corrections, Jimmy proactively detects ambiguous or incomplete requests and seeks clarification.
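To illustrate what validating a request against a menu knowledge base and asking for clarification can look like, here is a generic Python sketch. It is not Jimmy's implementation; the MenuItem type, the check_request function, and the sample menu are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass
class MenuItem:
    name: str
    sizes: List[str]            # empty list if the item comes in one size
    allowed_toppings: Set[str]


def check_request(menu: Dict[str, MenuItem], item: str,
                  size: Optional[str], toppings: List[str]) -> Optional[str]:
    """Return a clarifying question if the request is invalid or incomplete, else None.

    The menu is keyed by lowercase item name.
    """
    entry = menu.get(item.lower())
    if entry is None:
        return f"Sorry, I couldn't find '{item}' on our menu. Could you pick something else?"
    if entry.sizes and size is None:
        # Incomplete request: ask instead of guessing a default size.
        return f"What size would you like for the {entry.name}: {', '.join(entry.sizes)}?"
    if entry.sizes and size not in entry.sizes:
        return f"The {entry.name} comes in {', '.join(entry.sizes)}. Which would you like?"
    unknown = [t for t in toppings if t not in entry.allowed_toppings]
    if unknown:
        return f"We can't add {', '.join(unknown)} to the {entry.name}. Any other toppings?"
    return None
```

Returning a question rather than an error keeps the conversation going, which is the behavior the benchmark rewards over transferring the call to a human.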

Ordering pizza with Jimmy isn’t just about efficiency—it’s about vibes. Jimmy represents Pizza My Heart’s 90s surfer persona for a fun, conversational experience.

3.2 Failure Analysis

To identify areas of improvement for each agent, we analyzed the test results and summarized the common reasons for failure.

Palona.ai for PMH:

Suboptimal voice recognition. Jimmy recognized most orders correctly; voice recognition failed in only a few instances, due to accents. For example, the "Vegan Big Sur" pizza was sometimes misidentified because of how it was pronounced.

ConverseNow for Domino’s Pizza:

Can’t process complex or customized orders. The agent couldn’t modify the quantity or toppings of pizzas already in the cart. While it performed well in straightforward scenarios, it struggled with more intricate requests.

HungerRush for Jet’s Pizza:

No support for multi-message conversation. The texting agent requires all order items in a single message and can’t process requests spread across multiple messages.

No support for multiple toppings. Even when the whole order was sent in a single message, the agent could not handle more than one topping customization. For example, when a customer asked to add “a pepperoni pizza with extra cheese and olives”, the agent didn’t include the pepperoni.

SoundHound for CPK:

Poor cart management. This agent recognized order details well, but its cart management failed in cases of medium to high complexity. For instance, it couldn’t process a user’s request to customize a pizza already in the cart, and it sometimes replaced a pizza in the cart with a new pizza the user had asked to add.
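Several of the failures above involve changing items that are already in the cart. A minimal sketch of the cart behavior our test cases expect, assuming a simple indexed cart (the Cart and CartLine names are hypothetical, not any vendor's API): adding an item always appends a new line instead of overwriting one, and quantity or topping changes update the existing line in place.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CartLine:
    item: str
    quantity: int = 1
    toppings: Dict[str, int] = field(default_factory=dict)  # topping -> portions


@dataclass
class Cart:
    lines: List[CartLine] = field(default_factory=list)

    def add(self, line: CartLine) -> int:
        """Append a new line and return its index; never overwrites existing lines."""
        self.lines.append(line)
        return len(self.lines) - 1

    def set_quantity(self, index: int, quantity: int) -> None:
        """Change the quantity of an item already in the cart."""
        if quantity < 1:
            raise ValueError("use remove() to drop an item")
        self.lines[index].quantity = quantity

    def add_topping(self, index: int, topping: str, portions: int = 1) -> None:
        """Customize an item already in the cart in place."""
        toppings = self.lines[index].toppings
        toppings[topping] = toppings.get(topping, 0) + portions

    def remove(self, index: int) -> None:
        """Remove a line the customer no longer wants."""
        del self.lines[index]
```

Keeping "add" and "modify" as separate operations is what prevents the swap error described above: a request for a new pizza can only ever grow the cart.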

SoundHound for Blaze Pizza:

No support for topping customization. Half of the test cases failed because the agent couldn’t customize toppings.

Overly sensitive escalation. The agent repeatedly tried to transfer the call to a human representative, especially when the caller requested items that were not on the menu.

PizzaVoice for Rusty’s Pizza:

Incomplete menu support. This agent had limited support for menu items such as salads, sides, and desserts. For example, if the user included a salad, the agent was unable to handle it and would transfer the call to a human representative.

Suboptimal topping management. The ‘x2’ option for pizza toppings on Rusty’s menu wasn’t supported. For example, requesting extra mushrooms on Rusty’s Special failed, as the agent didn’t recognize mushrooms as an extra topping.
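A hypothetical sketch of how an "x2" topping option can be modeled: each topping carries a portion count, so "extra mushrooms" on a pizza that already includes mushrooms raises the count from 1 to 2. The cap of two portions, the function name, and the sample toppings are assumptions, not Rusty's actual menu data.

```python
from typing import Dict

MAX_PORTIONS = 2  # assumption: "x2" is the most a single topping can be doubled


def request_extra(toppings: Dict[str, int], topping: str) -> Dict[str, int]:
    """Return new topping counts after a request for 'extra <topping>'.

    A topping the pizza already has goes from x1 to x2; a topping it lacks
    is added at x1. Requests beyond MAX_PORTIONS are rejected. The input
    dict is left unchanged.
    """
    current = toppings.get(topping, 0)
    if current >= MAX_PORTIONS:
        raise ValueError(f"{topping} is already at x{MAX_PORTIONS}")
    updated = dict(toppings)
    updated[topping] = current + 1
    return updated
```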

Conclusion

Pizza ordering may seem simple, but heavy customization, mid-order adjustments, and real-world requests push AI ordering systems to their limits. Inaccurate orders lead to frustrated customers, operational headaches, and, potentially, lost revenue.

As AI technology advances every day, we’ll continue to update our benchmark to hold ourselves and the entire industry to a high standard.